Using AI agents to do months of work in a weekend

On this page

Like a lot of OSS maintainers, I was underwater: hundreds of issues, feature requests stacked for years, and a constant “I should get to that” guilt loop.

It’s hard to prioritize – there’s lots of other stuff to get done – but you always feel guilty for it.

This summer, I decided to do something about it. I’ve been working heavily with AI agents, and I knew I’d be able to at least knock out a good few issues over a weekend.

That feels like an understatement now.

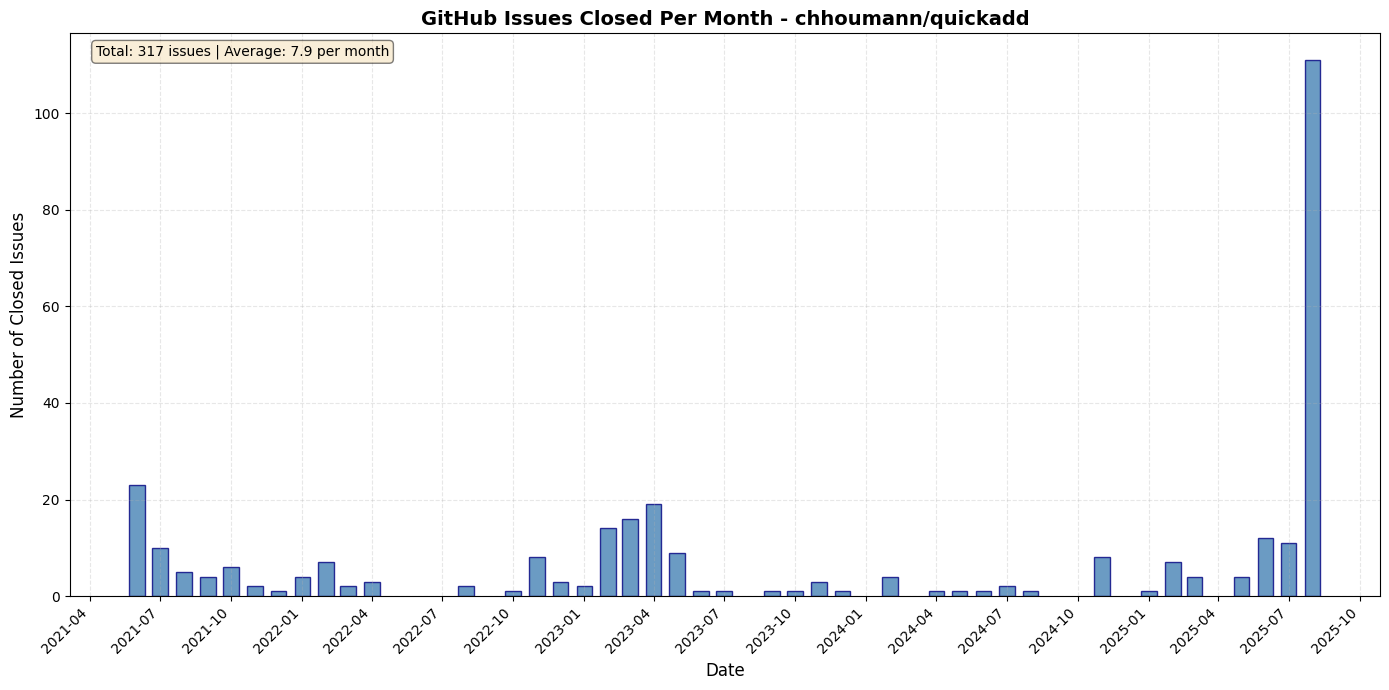

I resolved 85 issues in a weekend, with 57 issues closed on Friday alone. August isn’t over, and the current tally is at 111 issues closed this month. And that’s without trying very hard – only working a few hours on some days.

I’m not sharing numbers to brag (they aren’t that great), but to share what’s possible with little effort.

This post breaks down exactly what I built, how I used it, and some takeaways.

It all started by creating custom, AI-powered triage bot that massively accelerated how long it takes for me to process an issue.

The triage bot

I built claude-github-triage, a tool that uses Claude Code SDK to automatically analyze every issue in the context of the entire codebase.

If you have Claude Max ($200/mo), you get generous limits. That made this both fast and cheap to run at scale.

Before, I’d manually load the repo into my head for each issue: is it still relevant, where would it live, what code paths are involved, etc. Now the bot does that pass for me and I skim its output. Issue processing dropped to ~5 minutes on average.

The triage bot gives Claude access to the entire repository for each issue analysis.

This means Claude can:

- Search for relevant code implementations

- Check if similar functionality already exists

- Identify duplicate issues by understanding the actual codebase

- Suggest specific files that need modification

Each issue gets a structured analysis:

- Should Close: Yes/No + why

- Labels: Suggested labels

- Confidence: High/Medium/Low

- Analysis: In-depth reasoning about the issue

- Suggested Response: Draft reply to the author

Here’s an example output:

=== TRIAGE ANALYSIS START ===

SHOULD_CLOSE: No

LABELS: enhancement, UI/UX, good-first-issue

CONFIDENCE: High

ANALYSIS:

This feature request for dark mode is valid and aligns with modern application standards.

The codebase already has a theming system in src/themes/base.ts that could be extended.

Implementation would require:

1. Adding dark theme variables to the theme system

2. Creating a toggle component in the settings panel

3. Persisting theme preference to localStorage

This is well-scoped and would benefit many users based on similar requests (#45, #78).

SUGGESTED_RESPONSE:

Thank you for this feature request! Dark mode is indeed valuable and I can see

from the codebase that we have the infrastructure to support this. The theming

system in src/themes/ just needs to be extended with dark mode variables.

I'll prioritize this for the next release.

=== TRIAGE ANALYSIS END ===I considered turning it into more structured data, by doing a structured-output pass after the analysis, but decided against it. The above worked just fine.

The bot maintains a metadata file tracking which issues have been triaged. It automatically skips already-processed issues unless you use the --force flag. This means you can run it repeatedly without wasting API calls.

This also helped resume processing when I hit rate limits.

If you’re wondering why exactly I chose to build the SDK-based workflow, it’s because I don’t think just letting some kind of AI agent loose in the repository and trying to have it go through every issue by manually prompting it to do so would work. Deterministically injecting the context of the issue and anything else that might be relevant, and then handling one issue at a time seems to work the best.

The playbook I used

Phase 1: Mass triage

Run it on every open issue:

bun src/cli.ts triage -o chhoumann -r quickadd \

-p ~/projects/quickadd \ # So Claude Code uses the folder as context

--concurrency 3I hit my Claude Code Max limits multiple times.

Worth it: every issue got a contextual read and clear next steps.

Beyond triage, I added a lightweight review loop:

# View your triage inbox

bun src/cli.ts inbox --filter unread

# Review unread issues one by one

bun src/cli.ts review

# Mark issues as read after handling them

bun src/cli.ts mark 123 --read

# Or: Sync with GitHub to mark closed issues as read

bun src/cli.ts sync -o owner -r repoIt feels like an inbox. You process, mark done, move on.

Phase 2: Quick Wins

I reviewed the triage results and immediately closed:

- Duplicate issues (the bot identified these by analyzing actual code & using the

ghcli) - Outdated requests (features that were already implemented)

- Issues that were out of scope

Phase 3: Implementation

For the remaining issues marked as valid enhancements or bugs, I got to work coding up solutions using my standard AI-coding workflow. See How I use Claude Code (opens in a new tab).

I mostly used Amp (opens in a new tab) by Sourcegraph here. Using Claude Code would probably have been cheaper, but Amp just works great, and I really enjoy using it. And the Oracle is great for design.

My current favorite tools for AI-coding are: Amp, Claude Code, Codex CLI, and Cursor.

As for models: GPT-5-high, Claude Opus 4.1, Sonnet 4.

The analysis from the triage bot wasn’t spot on every time, but useful roughly 8/10 times. That, combined with my own understanding of the codebase and using an agent to code, made this phase much faster.

Takeaways

There’s no excuse anymore to not have an agent run every time you get an inbound issue, ticket, or whatever request. It’s practically free compared to how much time it saves you.

My current goal with AI is finding ways to embrace exponentials (opens in a new tab).

How can I build systems to compound my effectiveness?

And to build the system that builds the system.

Building is now table stakes. The moat is taste.

As the cost of code falls toward zero, the scarce resource is judgment. LLMs let you ship almost anything; the work is deciding what deserves to exist and what should be cut.

Processing 100 issues at 90% accuracy beats carefully handling 10 issues at 100% accuracy. You can always refine.

The goal was never to make a perfect triaging system that can handle every case perfectly. Shipping fast and getting something out the door, that’s the goal.

I spent very little time adjusting the prompts. Once they were good enough, I set the bot loose and got to work.

Getting Started

Want to try this yourself? I’d encourage you to create your own solution. But you’re free to take a look at mine & find inspiration!

If you plan to try this, beware prompt injection attacks on your GitHub issues. Use permission systems to prevent the bot from sharing secrets or performing malicious actions. Put the bot in a sandbox.

Resources:

- GitHub: claude-github-triage (opens in a new tab)

- QuickAdd Plugin: chhoumann/quickadd (opens in a new tab)

- Related: How I use Claude Code - My complete guide to AI-powered development

- Claude Code SDK: Documentation (opens in a new tab)